WiSci does Conferences

For winter quarter, we’ve been discussing practical advice, sharing the wealth of knowledge of our community. For this meeting, Emily Delaney and Kristin Lee led a discussion on what we should be thinking about when preparing for conferences, and the mechanics of actually presenting.

Conferences are a great opportunity to increase the visibility of your work and a chance to network and collaborate. Symposia in particular bring together leaders and rising stars interested in similar topics. But we need to be aware of the biases that influence who gets to speak. Emily shared research showing that the gender ratio of speakers can be skewed even at conferences with gender-balanced attendance. In data compiled from a decade of meetings of the European Society for Evolutionary Biology, only 9-23% of symposium talk invitations went to women. This imbalance was made worse because 50% of women declined their invitations, compared with only 26% of men. We discussed several possible reasons. The same few women may be invited to speak at many meetings. Women may also have responsibilities caring for family and young children, and childcare at conferences varies in quality (if it’s offered at all!). Imposter syndrome can also make people less likely to accept an invitation, or lead them to see it as a token gesture.

To increase parity in the gender ratios of speakers, societies can act top-down to encourage diversity within their symposia, and we as participants can act to support diverse speakers. For example, the instructions for organizing symposia can state that a 50/50 gender balance among speakers is expected. Organizers who cannot achieve it would then have to submit a declaration explaining why. Encouraging the use of self-nomination lists like Diversify EEB, Diversify EEB Grads, AcademiaNet, and EMBO Women in Life Sciences can help organizers identify relevant speakers. As participants, we can use our voices to call out offenders (see allmalepanels.tumblr.com for a humorous approach) and, in more extreme cases, boycott meetings. Another idea is to suggest someone to take your place when you decline an invitation. It certainly helps with imposter syndrome if the organizer can then invite that person with language like “your colleague recommended you.” Overall, we were encouraged that awareness can drive change. A paper showed that a major contributor to the number of female speakers in symposia at the 2014 General Meeting of the American Society for Microbiology was whether the symposium had a female convener, and the following year’s meeting did better.

We closed out the session with Kristin’s presentation on how others can interpret our verbal and nonverbal communication. I have a hard time recognizing these vocal patterns, but they include uptalk (phrases and sentences that end with a rising pitch, as if they were questions) and vocal fry (the low, scratchy voice of Ira Glass or Noam Chomsky). If you’re not sure what these sound like, there are great audio examples in the supplement here. Although both men and women use these vocal patterns, pieces all over the internet complain about them specifically in women’s voices, as heard by men.

Our first reaction was dismay at having yet another thing to worry about: the sound of our voices. But others pointed out that whenever you give a presentation, you’re putting on a show. Thinking about the way you speak is not that different from considering how you dress or what your slides look like. All are personal choices, and while they really shouldn’t matter, how others perceive us is tricky and difficult to control. We didn’t reach a consensus on whether the onus to change should be on the speaker or the listener, but it is clear that awareness is important. We can police negative comments about speaking styles, even when they’re masked as compliments. Telling a presenter that she spoke with poise and confidence is insulting if you don’t also commend her really amazing research. Instead of worrying about what people sound like, we can focus on the content of what they are saying.

Thanks to Emily and Kristin for organizing!

-Michelle Stitzer

Behavioral insights from Kate Glazebrook @ Applied

For the third and final session in our series on Diversity and Inclusivity, Kate Glazebrook shared her work on the unconscious biases in hiring practices and on efforts to remove them. Kate is a Principal Advisor (Head of Growth and Equality) at the Behavioural Insights Team, a UK government institution dedicated to applying the behavioral sciences. Kate is also the co-founder of Applied, a service that incorporates leading behavioral science research to remove unconscious bias from hiring practices.

Diverse groups are important for many reasons. They have been shown to process information more deeply and to prepare more before discussing issues. Diverse teams are also more creative and more accurate, and they are less prone to groupthink. In essence, it’s beneficial to have people who think about things differently. Still, there are twice as many FTSE 100 bosses named John as there are female bosses.

Implicit biases in our hiring practices contribute to this lack of diversity. Unfortunately, there is little evidence that diversity training works. In response, Kate shared her work with Applied to remove some of these biases. This work applies behavioral science research on how people make decisions and analyze information. In particular, Applied has developed technology to remove bias and improve the effectiveness of hiring. It is built around five key features:

- Anonymize: remove all identifiers from candidates’ materials that are irrelevant to the job but may affect decision-making.

- Chunk: split candidate applications into single dimensions so that reviewers compare all candidates on one question at a time. This horizontal review improves objectivity and decreases the reviewer’s cognitive load. It is difficult for people to compare options that vary on multiple dimensions, and by default we choose the options that feel safer (i.e., more familiar or more similar to ourselves). Additionally, reading applications vertically (one whole application at a time) creates a halo effect. The impressions we form at the beginning affect how we assess the information that follows, so a great or terrible first paragraph can disproportionately affect your chances.

- Harness the crowd: aggregate input from multiple independent reviewers. The collective judgment of several reviewers is better than that of even a single expert. This approach also lets reviewers see batches of candidates’ responses in different orders. Kate said that three independent reviewers is the optimal team size, balancing accuracy in choosing the best candidate against the resources required.

- Test what counts: shift assessment away from CV measures that do not reflect job success and toward work sample tests and structured interviews.

- Intelligent feedback: measure what works and build on this feedback.

Kate also shared ways in which language matters during recruitment, before candidates even apply. How diversity is described matters. Saying diversity is important for reasons of equity increases the number of ethnic minority applicants. Saying it is important because we value differences does not, perhaps because applicants fear being tokenized. Gendered language also affects who applies. Words like “helping” and “collaborative” increase the number of female applicants compared with words like “individual,” “drive,” or “competitive.”

Thanks, Kate, for sharing interesting insights from behavioral science research and for chatting with us. You can check out Kate’s TEDx talk here. She also mentioned this book, What Works: Gender Equality by Design by Iris Bohnet, for those interested in learning more.

-Kristin Lee

Winter quarter events scheduled

Our remaining winter quarter events have been scheduled for Tuesdays on Feb. 14th and March 14th from 12:00-1:00 pm in 2342 Storer Hall. We’ll also do a winter quarter happy hour at DeVere’s on Feb. 21st at 5:30 pm.

Hope to see you there!

Our conversation with Lee Bitsóí

Last month, we continued our series on Diversity and Inclusivity with a discussion on leadership styles with Lee Bitsóí, Director of Student Diversity and Multicultural Affairs at Rush University Medical Center. Lee joined us over Skype and discussed positive academic leadership and how it can help promote greater diversity in academia.

Most often, leaders employ a traditional style of leadership. Under traditional leadership, the leader focuses on repairing what is wrong, addressing strengths and weaknesses, and reacting to situations. While this type of leadership can be successful, its focus on negative attributes that “need fixing” may not be supportive. In contrast, positive leadership focuses on improving what is right, addressing strengths (while also recognizing weaknesses), and proactively creating healthy environments. Positive leadership can build a sense of hope and optimism, whereas traditional leadership may never achieve this goal. Lee recommended that we adopt a positive style of leadership, which has greater potential to push academia toward inclusivity.

One topic that especially struck me during our conversation was the difference between nurturing and coaching. Nurturing includes listening to, validating, and encouraging students whenever they face a challenge. In contrast, coaching acknowledges the student’s challenge but focuses on engaging the student to actively find solutions that address their concerns. In academia, we are often given the chance to lead when we mentor undergraduate and graduate students. It had never occurred to me that nurturing a student might not serve them best. Although the difference is subtle, coaching can enable leaders and mentors to empower students to make their own proactive decisions. In the future, I will try to view my role as a postdoc more as a “coach” and think about what tools and skills my undergraduates need to achieve their research and career goals.

Spending an hour discussing leadership skills with Lee and learning about his experiences both as a researcher and Director of Student Diversity was immensely valuable in thinking about how to create inclusive and positive environments. I have already started reflecting on my own leadership style and aspire to implement more positive leadership in my current lab and future labs.

The implicit biases in labels

For our first WiSci meeting of Fall 2016, we were lucky to have Didem Sarikaya lead us through examining our explicit and implicit biases.

We first discussed the distinction between explicit and implicit biases. We brainstormed axes of discrimination that people may face and ended up with the following: gender, sex, race, age, religion, orientation, disability, income/class, nationality/region, education, physical appearance, language/dialect/accent, family, and profession. Biases along these axes can be either explicit or implicit. An easy way to distinguish between them is to think about intent: explicit biases involve active thought, while implicit biases tend to involve a lack of thought. For example, if a landlord directly refused to rent to a dog owner, that would be an explicit bias. But if a landlord was open to renting to dog owners yet never actually did so because of a feeling that dog owners are dirty, that would be an implicit bias. Other examples of implicit bias that we discussed included a lack of accessibility in academic buildings and labs, the way pregnancy might be handled in hiring decisions, and gender differences in undergraduate teaching evaluations.

Next, Didem led us through a test for implicit bias. (Readers who want a sense of how these tests work can take them online at https://implicit.harvard.edu/implicit/takeatest.html.) We did a classroom version of a test developed by Keith Maddox and Samuel Sommers at Tufts University to detect race-based bias, and we did not do well. It was pretty uncomfortable, but it forced us to confront the implicit biases that many of us carry. Afterwards, Didem showed us statistics indicating that most Americans who take these types of tests show bias along various axes of discrimination.

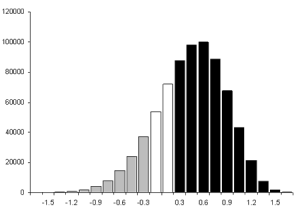

Distribution of implicit association test scores from 2000 to 2006. Dark bars represent faster responses when African American names were paired with unpleasant adjectives and European American names with pleasant adjectives. Gray bars represent faster responses when European American names were paired with unpleasant adjectives and African American names with pleasant adjectives (retrieved from: https://implicit.harvard.edu/implicit/demo/background/raceinfo.html).

We turned to thinking about how biases can shape the way we label ourselves and others. We wrote down a few labels that had been applied to us that we did not like. We then took our labels and mingled, discussing the labels we disliked and trying to trade them for labels we liked better. The labels I saw included “aggressive,” “sweet,” “unapproachable,” “pretty,” and “small.” A theme that emerged was the importance of context. A comment like “school-teacher voice” may sound benign, but it can be fairly hurtful to someone who is trying to develop their “professor voice.” Similarly, words like “nice” and “sweet” can feel demeaning and undermine a young scientist’s confidence in their intellectual abilities.

We ended the workshop by briefly discussing how we could use what we’d learned to make our own communities more inclusive. Suggestions from the group included being careful about the language we use when talking to and about others and when writing reference letters, and acknowledging when we make mistakes. We also generally agreed that implicit biases often stem from not knowing people from underrepresented groups across various axes of discrimination. Increasing the number of people from underrepresented groups in our communities is therefore essential.

For more information, please refer to Ambika Kamath’s excellent blog post on how to lead workshops on making academia more welcoming to underrepresented groups. It served as the main source of inspiration for this session and is a great resource for anyone interested in organizing similar activities.

-Emily Josephs

Test yourself for implicit biases

Here is a link to some implicit bias tests that Didem mentioned in today’s WiSci session on labels. Definitely worth taking one, especially if you are teaching this quarter!

Fall theme: Diversity and inclusion in academia

This fall, we will hold three sessions on Diversity and Inclusion with two very exciting guest speakers. We often hear “diversity” at events and seminars and see it in articles on social media. Still, describing what Diversity and Inclusion means is a difficult task for most academics in the life sciences. Our goal is to start, and add to, a conversation that will continue as each attendee moves through their career.

- October 20: Blinded by our privileges, hurt by our labels; exploring implicit biases and how they influence our interactions

- November 10: Cross cultural dialogue and building empathy

- December 8: Removing unconscious biases when making hiring decisions

Fall 2016 schedule is here!

It’s time to kick off WiSci’s third year! This fall, our theme will be diversity in the workplace. We’ve lined up some fun speakers and activities – hope to see you at our first meeting!

Click on our ‘Calendar’ menu to see each monthly event.